I Keep Getting Asked the Same Question

When someone creates their first AI video on UGCAds, the most common reaction after the video generates is some version of: "Wait, how did it do that?" Not in a skeptical way — in a genuinely curious way. The gap between "type a script and pick an avatar" and "finished video with lip-synced audio" is large enough that the mechanism is not obvious.

I am not an AI researcher. But I have spent a lot of time talking to people who are, and I have spent even more time watching how these tools evolve. What follows is the clearest non-technical explanation I can give of how AI video generation works — what is actually happening under the hood when these models produce video.

Start Here: What AI Video Generation Is Not

It helps to start by clearing up what AI video generation is not, because there are two common misconceptions.

It is not video editing. Traditional video production involves capturing real footage and then editing it. AI video generation does not start with real footage. It synthesizes video pixels from scratch based on learned patterns.

It is not animation software in disguise. Traditional animation involves an artist specifying every movement, expression, and scene element. AI video generation learns patterns from massive amounts of existing video and can produce new video that follows those patterns without an artist specifying each element.

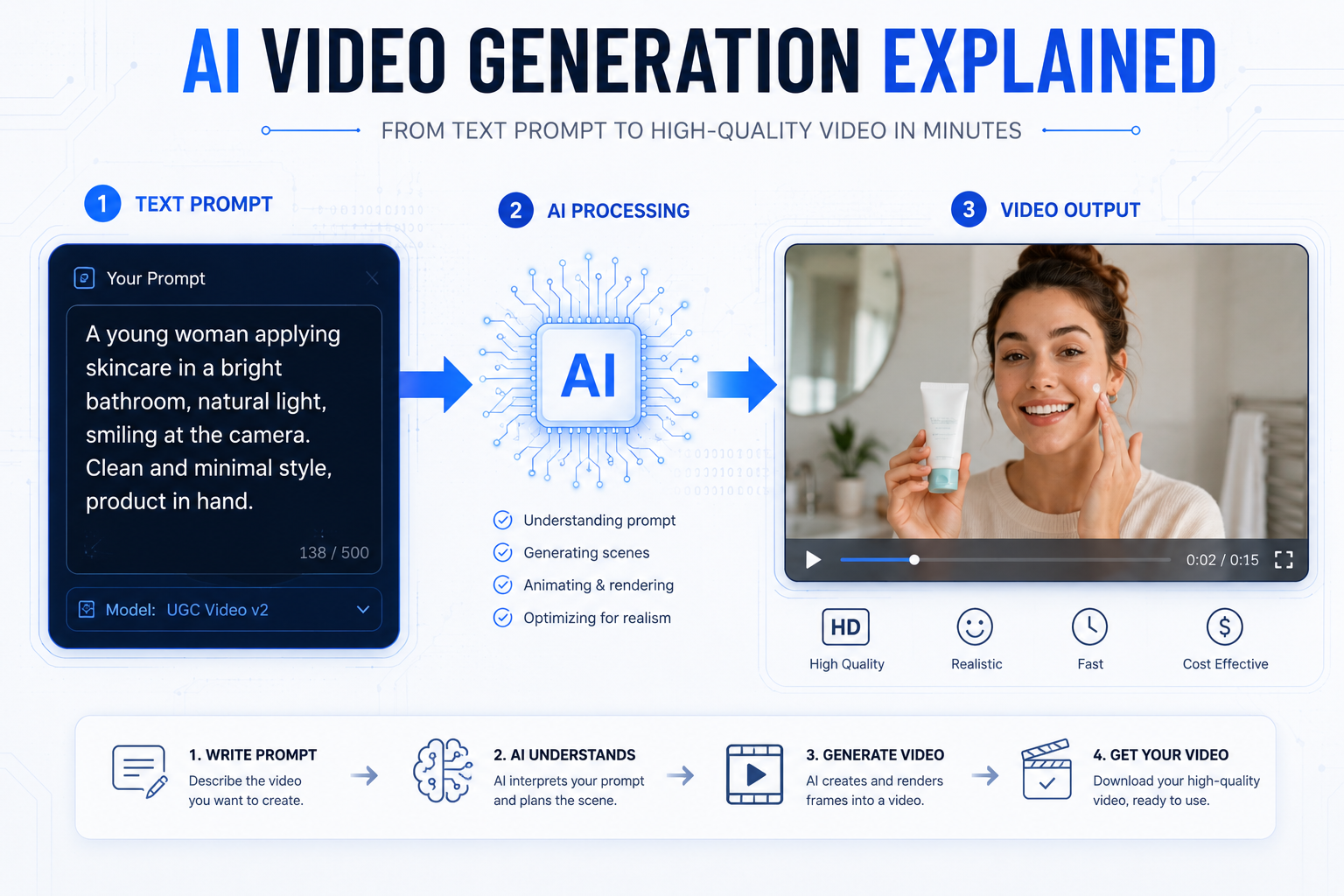

What AI video generation actually is: a statistical prediction process. The model has learned, from watching billions of video frames, what video looks like — how motion works, how lighting changes, how human faces move when speaking, how objects interact with environments. When you give it a prompt, it generates video that matches the statistical patterns of what video with those characteristics looks like.

The Core Technology: Diffusion Models Extended to Time

Most state-of-the-art AI video generators in 2026 are built on diffusion models. This is the same underlying technology that powers image generators like DALL-E, Stable Diffusion, and Midjourney.

Here is the basic idea of how a diffusion model works:

During training, the model is shown real images (or video frames) and learns how to destroy them — adding random noise progressively until the original image is unrecognizable static. Then it learns to reverse that process: given a noisy image, predict what a slightly less noisy version of that image would look like.

Once the model has learned to do this well, you can start the process from pure random noise and repeatedly apply the "make it less noisy" prediction — and each step moves the output closer to a coherent image that matches your text description.

Video generation extends this to the time dimension. Instead of generating a single frame, the model generates hundreds of frames that are consistent with each other over time — meaning the same person looks the same in frame 1 and frame 300, objects do not teleport between frames, and motion looks physically plausible.

Temporal consistency is the hardest part of video generation and the main reason video models took longer to reach usable quality than image models. Generating a single convincing image is much easier than generating 300 frames that all make sense together.

How the Best Models Handle Human Faces and Speech

For advertising specifically, the most important capability in AI video generation is producing realistic human faces delivering speech. This is where the quality difference between models is most visible.

Generating a realistic talking human involves multiple systems working together:

Text-to-speech synthesis. Your script is converted to natural-sounding audio by a text-to-speech model. The best TTS systems in 2026 are remarkably good — they handle pacing, emphasis, and natural speech patterns well enough that the voiceover sounds human to most listeners.

Lip sync alignment. The AI avatar's mouth movements must be synchronized to the audio track, frame by frame. The models that handle this best — and Kling 3.0 and Seedance 2 are currently the leaders in this specific capability — analyze the phoneme sequence in the audio and generate matching mouth positions at each frame.

Expression and micro-movement. Realistic human video includes micro-movements: slight head tilts, blinks, shoulder movements, subtle changes in facial expression. Training these natural-seeming variations into a generated video is what separates "creepy AI face" from something that reads as a real person.

The UGC video generation pipeline on UGCAds handles all three automatically. You provide the script and avatar choice; the platform runs text-to-speech, generation, and lip-sync in a single workflow without you needing to manage the component systems.

What Makes Seedance 2, Sora 2, Kling 3.0, and Veo 3.1 Different From Each Other?

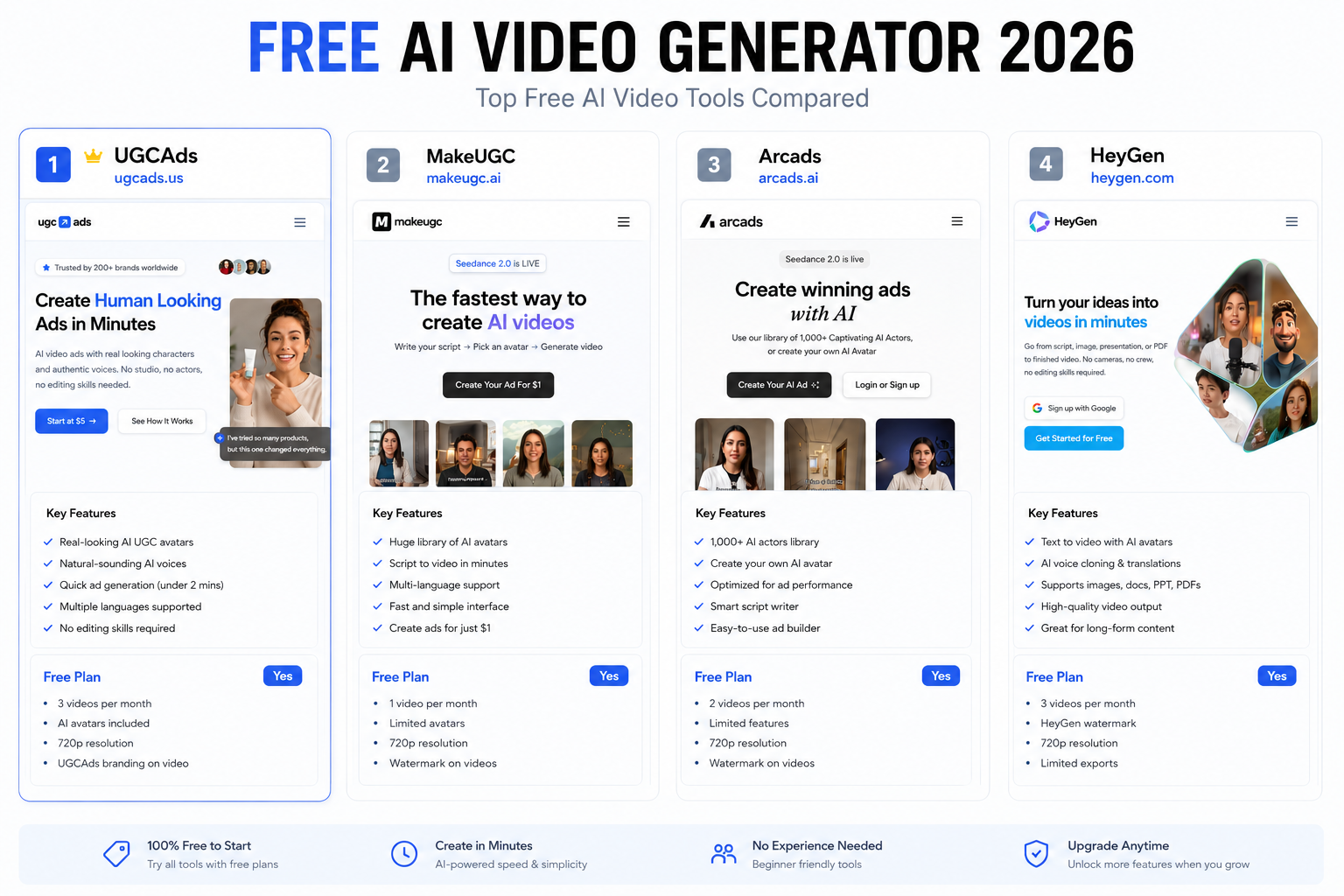

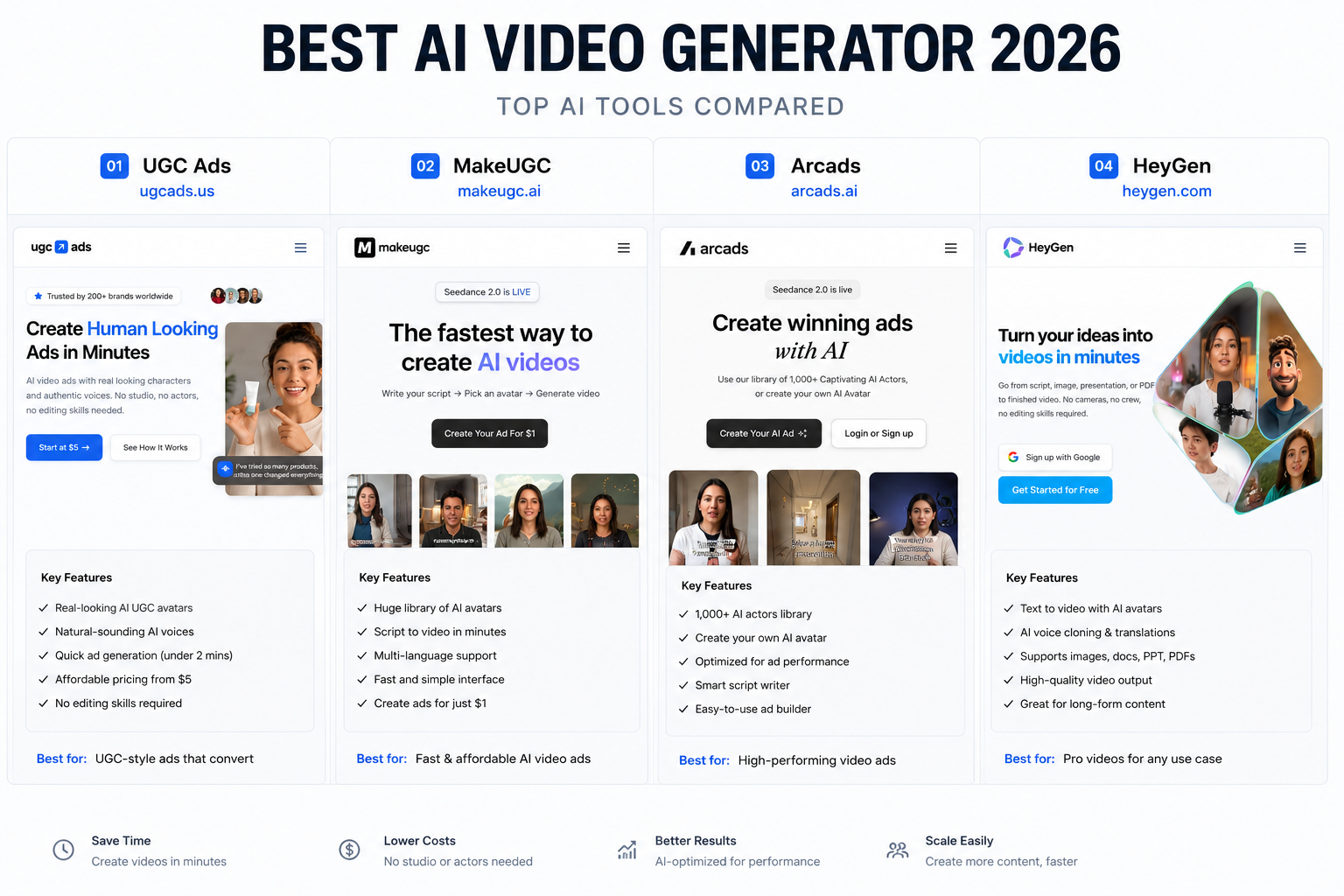

All four models available on UGCAds use diffusion-based architectures, but they differ in their training data, optimization targets, and practical strengths:

Seedance 2 (ByteDance)

ByteDance has a unique data advantage: TikTok. Seedance 2 was trained on an enormous corpus of short-form vertical video that includes a large proportion of authentic UGC content — people talking directly to camera, product demonstrations, casual lifestyle content. This is why Seedance 2 is particularly good at generating content that looks native to social media feeds. It also explains why it is the fastest of the four models: ByteDance optimized it for rapid generation to support the pace of social content production.

Max clip length: 15 seconds. Average generation time on UGCAds: under 90 seconds.

Sora 2 (OpenAI)

OpenAI trained Sora on what they describe as a broad internet video corpus, with a focus on physical plausibility and scene coherence. Sora 2's distinguishing characteristic is its ability to handle complex, multi-element scenes — multiple people, complex environments, dynamic camera movement — with a level of coherence that other models struggle to match. It also supports longer clips (up to 20 seconds), which gives it more narrative range.

The tradeoff is generation time. Sora 2 takes 2-5 minutes to generate a clip versus under 90 seconds for Seedance 2. For brands that prioritize quality over iteration speed, it is worth it. For high-volume testing, Seedance 2 is more practical.

Max clip length: 20 seconds. Average generation time: 3-4 minutes.

Kling 3.0 (Kuaishou)

Kuaishou's third-generation model is optimised for a specific problem that plagued earlier AI video models: realistic human-object interaction. Hands have historically been the weakest element in AI video generation — earlier models produced hands with the wrong number of fingers, objects that passed through each other, and physically implausible grip positions. Kling 3.0 has made the most progress on this problem of any model I have seen.

For e-commerce advertising where a person holding, applying, or demonstrating a product is central to the ad, Kling 3.0 produces the most convincing results. The quality on product interaction specifically is noticeably better than the other models.

Max clip length: 15 seconds. Average generation time: 1-3 minutes.

Veo 3.1 (Google DeepMind)

Google trained Veo on a different mix, with emphasis on cinematic and professionally-produced video. The result is a model with exceptional lighting realism, camera physics, and spatial depth — qualities that are more associated with film than with social media content. Veo 3.1 is the model I would use for a premium product hero shot where visual quality is the primary concern.

The constraint is clip length: 8 seconds maximum. That limitation makes Veo 3.1 better as a component in a longer video than as a standalone ad generator for most formats.

Max clip length: 8 seconds. Average generation time: 2-4 minutes.

What Are the Current Limitations of AI Video Generation?

Being honest about this helps set appropriate expectations and prevents the kind of disappointment that happens when people expect magic and get very-good-but-not-perfect.

Clip length. The maximum is 20 seconds with Sora 2 and 15 seconds with most other models. This is not a hard technological limit — it is a current practical limit set by computational cost and training choices. Longer clips are coming, but today, any UGC ad longer than 20 seconds needs to be assembled from multiple generations.

Complex physical interaction. Kling 3.0 has improved this significantly, but generating a realistic scene of a person assembling, cooking, or engaging in complex physical tasks still produces occasional artifacts. Simple product demonstrations — applying, holding, pouring — work well. Complex multi-step physical interactions are harder.

Consistent specific faces. If you want a generated video to feature a recognizable specific person (for example, the same AI avatar in multiple videos that are clearly the same character), maintaining perfect consistency across separate generation sessions is not yet fully reliable. This is improving with avatar-based systems like UGCAds' avatar library.

Specific branded product integration. Getting a specific physical product — your exact packaging, your specific product colors — to appear correctly in an AI-generated scene is still a work in progress. AI product photography tools (the photoshoot feature on UGCAds) handle this well for still images. Full video integration of a specific product is harder.

Where Is AI Video Generation Heading?

I will be careful here because this technology moves fast enough that confident predictions often look silly within six months. But based on the trajectory I have watched:

By end of 2026, I expect real-time or near-real-time generation for standard formats — under 10 seconds per clip rather than 90 seconds or 4 minutes. The computational efficiency improvements happening at the model level and the infrastructure level are both moving fast.

Longer consistent clips — 60+ seconds — are likely achievable with the leading models by mid-2027, based on published research directions from OpenAI and Google.

Reliable product integration from a reference image is the capability I am most watching. For e-commerce advertising, being able to generate a realistic scene featuring your specific product from a product photo would eliminate the last major advantage traditional photography has over AI generation. This capability is getting closer.

If you want to see what the current state of the art looks like for UGC ad generation, you can generate a test video on UGCAds for $5 and form your own view of where the quality is today. No need to take my word for it.

Also worth reading if this topic interests you: our comparison of the top AI video generators goes into the practical performance differences between models across real ad production use cases.